Dev environment for agentic coding

A standardized dev environment for the agentic coding era is how you get a multiplier on top of your coding agent

I am not here to talk about or praise coding agents. I am here to talk about the next multiplier, which remains your dev environment. Companies hiring for AI-native roles are not looking to find out how many tools you know or how quickly you can prompt your favourite coding agent. They are looking at how you have adapted your environment to accelerate the development cycle so you can 1-shot or 2-shot more and more things, get high-quality output and, ultimately, get more stuff done better.

We all seem to increasingly forget the difference between going from 0 to 1, then from 1 to, say, 80, and how much grind it takes to get from 80 to 90 and then add more nines. 0 to 1 is a largely solved problem: cookie-cutters exist, 0-to-1 website-building solutions exist, and they pack in more and more features, so the first prototype you get is more and more polished, with more coming out of the box. And it’s great - you should use them, as finding PMF is priority number one when you are building a product. Always. These solutions come with some really great properties:

- nearly zero setup cost: you go to a website, prompt and get a working prototype

- they follow standard practices - agents are trained on them and get to the result fast

- everything uses version control systems, some sort of database and standard connectors

What I don’t think is solved is taking the next step. You found PMF, you are getting traction, and you want to hire the next 2-3 engineers to keep building at the same velocity while features are added and removed and the product morphs based on user feedback.

What matters?

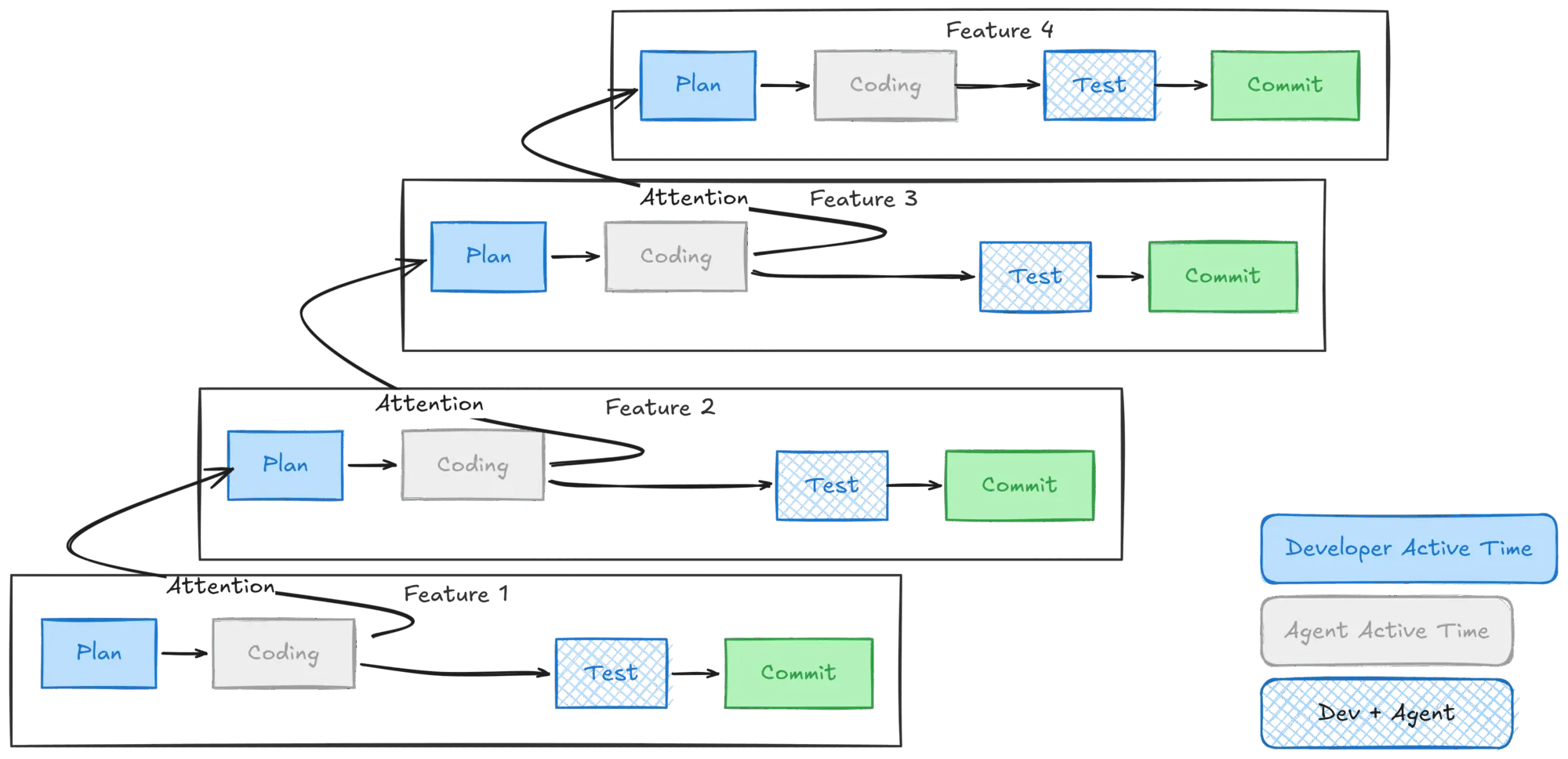

In modern dev environments we are all optimizing for velocity and quality. Increasingly, my coding workflow has been trending towards something akin to CPU pipelining; I call it attention pipelining. At any given time I can only think deeply about one problem and engage with it. I iterate on the plan with the agent first, mostly in an interactive or semi-interactive way while the agent does large codebase research. Then, when I am happy with the plan and the verification criteria, I let it code and move on to the next task, which is either planning another feature or testing and reviewing results produced by another coding agent. This keeps me sane and organized. What I need for that, though, is a dev environment in which agents can run independently and I can switch between them fast to look at results and test, instead of idling while I watch an agent do things.

Velocity

For maximum velocity, parallelism matters. Working on one feature at a time just does not cut it. Higher thinking levels burn more tokens, which takes more time. While some providers offer a fast mode at an additional price, you are still waiting, and you are paying a markup which at some point becomes another OPEX line that teams will be looking at. To keep moving while one agent is thinking, you want to run multiple agents in parallel.

Testing

Testing is what continues to eat the most time. The bigger the project and the more moving pieces it has, the more time goes into testing, especially end-to-end testing. You can ask a coding agent to write tests, but the default you get is really poor: everything is mocked, everything is unit-tested, but the end-to-end feature still does not work. Every time there is a small API change, hundreds of tests need to be updated. And while you can argue that the agent can do it, it takes time: every edit is still several seconds, and these add up the more test-slop you have in the codebase.

End-to-end testing requires a running service, or multiple services running at the same time, plus Playwright scripts for automated tests or hand-clicking through new features to verify that they work.

Improvement loop

Engineers have always been optimizing their setup to make the tools do the work for them: aliases, dotfiles, color coding, utility tools for common tasks like jq and httpie, small scripts executing repeated workflows. This all stays, but now we are also optimizing another tool, the coding agent of choice: skills, hooks, AGENTS.md, memory files, preferences, thinking levels, slash commands and more. The right engineering environment didn’t change dramatically; instead it got one more piece to optimize and distribute to all your working environments.

Security and Safety

Moving fast is really winning. --dangerously-skip-permissions or Auto mode are signs of where we want to be: give agents as much power as they need to run fast autonomously, without asking questions as they work, so we can fully disconnect or move on to the next item and later check and validate the end outcome and steer. This is useful for new feature development, but even more so for autonomous debugging loops on production issues, which require gathering information and running small tests/repros to validate assumptions before identifying the root cause. For those, the ideal scenario is enough access to production telemetry and data to get the job done, without the ability to modify anything in the production application. There are two aspects to that:

- running the agent in a disposable sandbox with a full dev environment, to be able to replicate problems

- controlling, upfront, how much access to production data the agent should have

How do big companies solve it?

While working at Meta, I had access to OnDemand. You spin up a new dev machine, the repository is cloned and checked out at the latest stable and warm version (for build caches), you can hit facebook.com through an alias like adek-{N}.ondemand.com, and you have a complete sandbox ready to debug, code and test end to end. Velocity is there - spinning up a new host took less than 10 seconds. I could have a few of these running and test multiple features without doing any branch checkouts; I would simply have adek-1.ondemand.com and adek-2.ondemand.com open in my browser and switch between them. If something broke, something happened to the machine, or I got it into an unrecoverable state, it was often faster to commit the change to a global pool of ephemeral commits, get a new machine and pull the commit.

Dotfiles would be automatically mounted, and any change to them would sync across my devboxes, making aliases immediately available on all my machines. Dev environment modifications would persist across sessions and be applied to new boxes. Everything would feel the same, with no additional setup needed.

Security and data access were handled by internal firewall policies, limited secret access and employee identities used to limit access to data and services.

I wanted something like that: an easy-to-spin-up dev environment with controlled secret injection, persisted customizations, and a complete stack running locally with an easy end-to-end testing path.

Ingredients

Containers

Two goals:

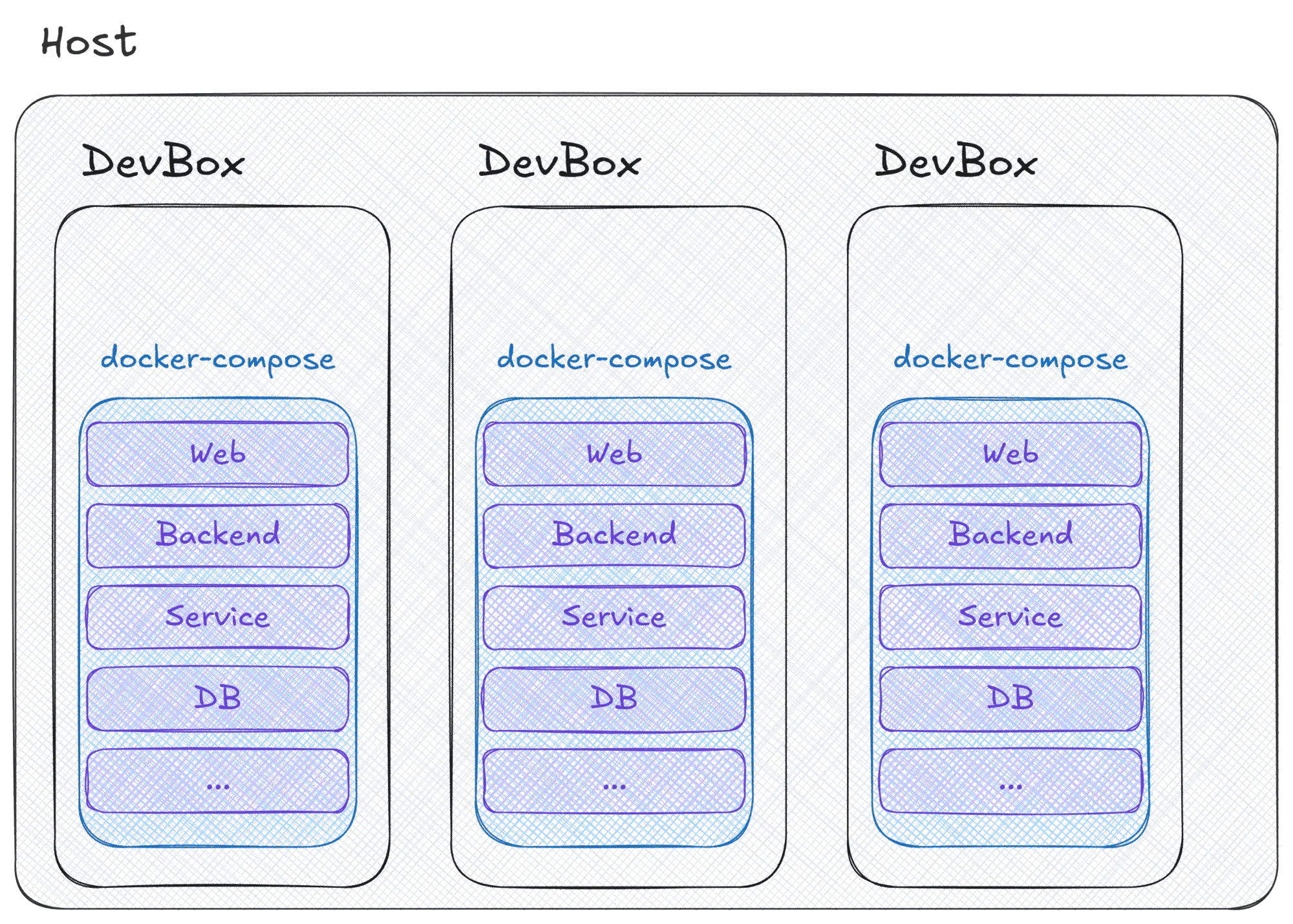

- Each dev environment should also be a container - reproducible setup, can be aligned as close as needed to the production environment, all the tools used are preinstalled and configured.

- Each application should be fully containerized as well, so I can run it inside the development container.

Containerizing applications today is trivial. Getting a Dockerfile for every service and a docker-compose file for the entire local stack takes a few minutes with an agent’s help. Then running docker compose up is all I need to have my local stack running. It is the de facto standard today, and if you are not using it for your dev environment, you are missing out. It is also very easy to tell the agent to look at the logs of the running docker containers, and it will do a much better job than with a vague instruction to go look for the logs. This is as close to production, where tasks run in containers, as you will get.

Hot reload

Pay attention to hot reload. You really don’t want to type and re-type docker compose up to trigger application rebuilds. TypeScript’s tsc --watch revolutionized workflows for front-end engineers, who want to refresh and see changes show up. And honestly, with agentic development you don’t want to remember, or have your agent remember, to rebuild your application every time it makes changes so you can test it e2e in the container. Most languages have support for hot reloading: TypeScript has --watch, Go has air, Rust has cargo watch. Frankly, today I would not pick a language which does not have a mature solution for hot reload. It’s just not worth my time.
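To make concrete what a watcher does on your behalf, here is a minimal polling sketch in Python. It is an illustration only, not how tsc --watch, air or cargo watch are implemented (those typically rely on OS file events), and the names snapshot, changed_paths and watch are hypothetical.

```python
import time
from pathlib import Path
from typing import Callable, Dict, Set, Tuple


def snapshot(root: str, suffixes: Tuple[str, ...] = (".ts", ".go", ".rs")) -> Dict[str, int]:
    """Map every matching source file under root to its mtime in nanoseconds."""
    state: Dict[str, int] = {}
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            state[str(path)] = path.stat().st_mtime_ns
    return state


def changed_paths(before: Dict[str, int], after: Dict[str, int]) -> Set[str]:
    """Files that were added, modified, or removed between two snapshots."""
    touched = {p for p in after if before.get(p) != after[p]}
    removed = set(before) - set(after)
    return touched | removed


def watch(root: str, rebuild: Callable[[Set[str]], None], interval: float = 0.5) -> None:
    """Poll root forever and call rebuild(changed) whenever something changes."""
    previous = snapshot(root)
    while True:
        time.sleep(interval)
        current = snapshot(root)
        diff = changed_paths(previous, current)
        if diff:
            rebuild(diff)
        previous = current
```

Neither the human nor the agent has to remember the rebuild step; saving a file is the trigger. In practice you would use the language's own watcher inside the container rather than a loop like this.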

Dotfiles

Dotfiles need to be version controlled, ideally checked into a repo from which you can pull the latest version and push updates to. Your .<agent>/ directory has many files which you should include in your dotfiles; then use chezmoi or dotbot to manage them.

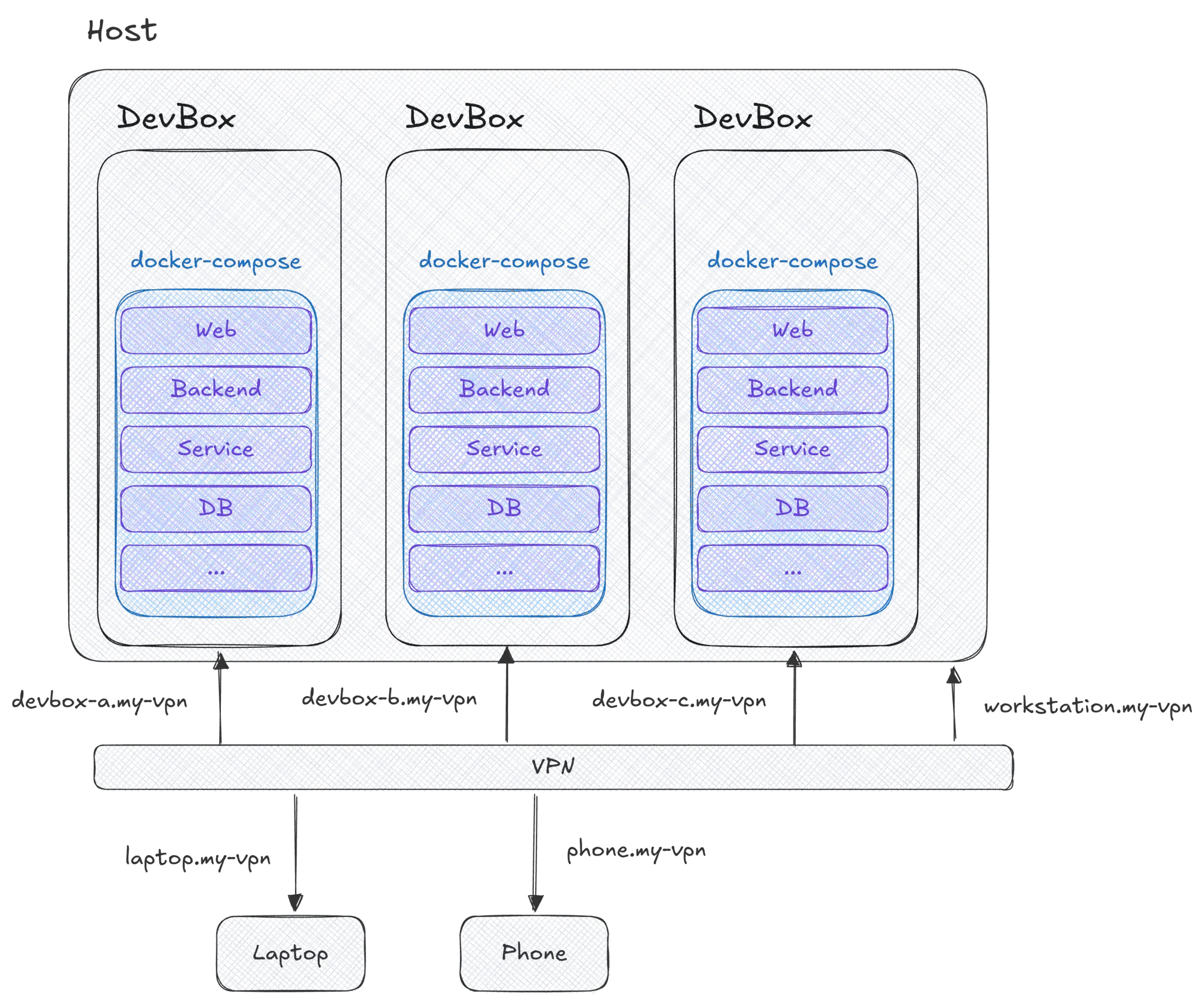

Access dev container through URL anywhere in the world

adek.ondemand.com being available on the network is a real game changer. You can open it in the browser and see the website it’s serving. You can ssh in easily, you can open the project in VS Code through the Remote SSH feature, you can load it on your phone, and you can even, optionally, open it to the world ngrok-style to connect external services to it.

For me the game changer was learning about Tailscale. Tailscale is a super lightweight VPN which makes it easy to add machines to it with almost no setup. I was chatting with @Gricha about whether every container managed by perry could simply register itself in the tailnet. Once he got that up and running, I can’t really imagine living without it. All my devices (NAS, laptop, workstation, phone) have Tailscale always running, making them accessible from anywhere in the world. Having every dev container available was the next logical step. This way my beefy workstation at home can host all my sandboxes, and I can access them by simply typing the devbox’s MagicDNS name (<devbox> short, or <devbox>.<tailnet>.ts.net fully qualified) in ssh or in the VS Code Remote SSH connection setup.

Together with tailscale serve and tailscale funnel I can hit the same MagicDNS name in the browser to see the running website, without magic port bindings. The cost is that you have to opt each port in: I ship two thin wrappers - ts-serve and ts-funnel - plus an auto-funnel-at-boot driven by a TS_FUNNEL_PORTS=8080,3000 env var, so the common ports come up without a manual tailscale invocation. The payoff is that everything runs on the same port everywhere, the same one used in production. Agent and human just change the URL: localhost, production.com or <devbox>.<tailnet>.ts.net. AGENTS.md needs to be written only once, with no wild guessing about which port maps to which running service, unlike running everything from git worktrees, which increasingly everyone is moving away from.
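A minimal sketch of the boot-time step, assuming the env var holds a comma-separated port list as in TS_FUNNEL_PORTS=8080,3000. The function names are hypothetical, and the real ts-serve and ts-funnel wrappers are not shown in this post. The sketch only builds tailscale serve commands; the --bg and --https flags are used as I understand the current CLI, so check tailscale serve --help before relying on them. Tailscale's documentation restricts Funnel to a few public ports, so a funnel variant would need a port mapping that is left out here.

```python
from typing import List


def parse_ports(raw: str) -> List[int]:
    """Parse a value like "8080,3000" into a de-duplicated list of ports."""
    ports: List[int] = []
    for chunk in raw.split(","):
        chunk = chunk.strip()
        if not chunk:
            continue  # tolerate empty values and trailing commas
        port = int(chunk)  # raises ValueError on anything that is not a number
        if not 1 <= port <= 65535:
            raise ValueError(f"port out of range: {port}")
        if port not in ports:
            ports.append(port)
    return ports


def serve_commands(ports: List[int]) -> List[List[str]]:
    """Build one `tailscale serve` invocation per port, without running it.

    Assumption: each local port is exposed on the same HTTPS port of the
    devbox's MagicDNS name, so URLs differ from localhost only by host.
    """
    return [
        ["tailscale", "serve", "--bg", f"--https={port}", f"http://127.0.0.1:{port}"]
        for port in ports
    ]
```

Returning argument lists instead of executing them keeps the parsing testable; an entrypoint script could pass each list to subprocess.run once tailscaled is up.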

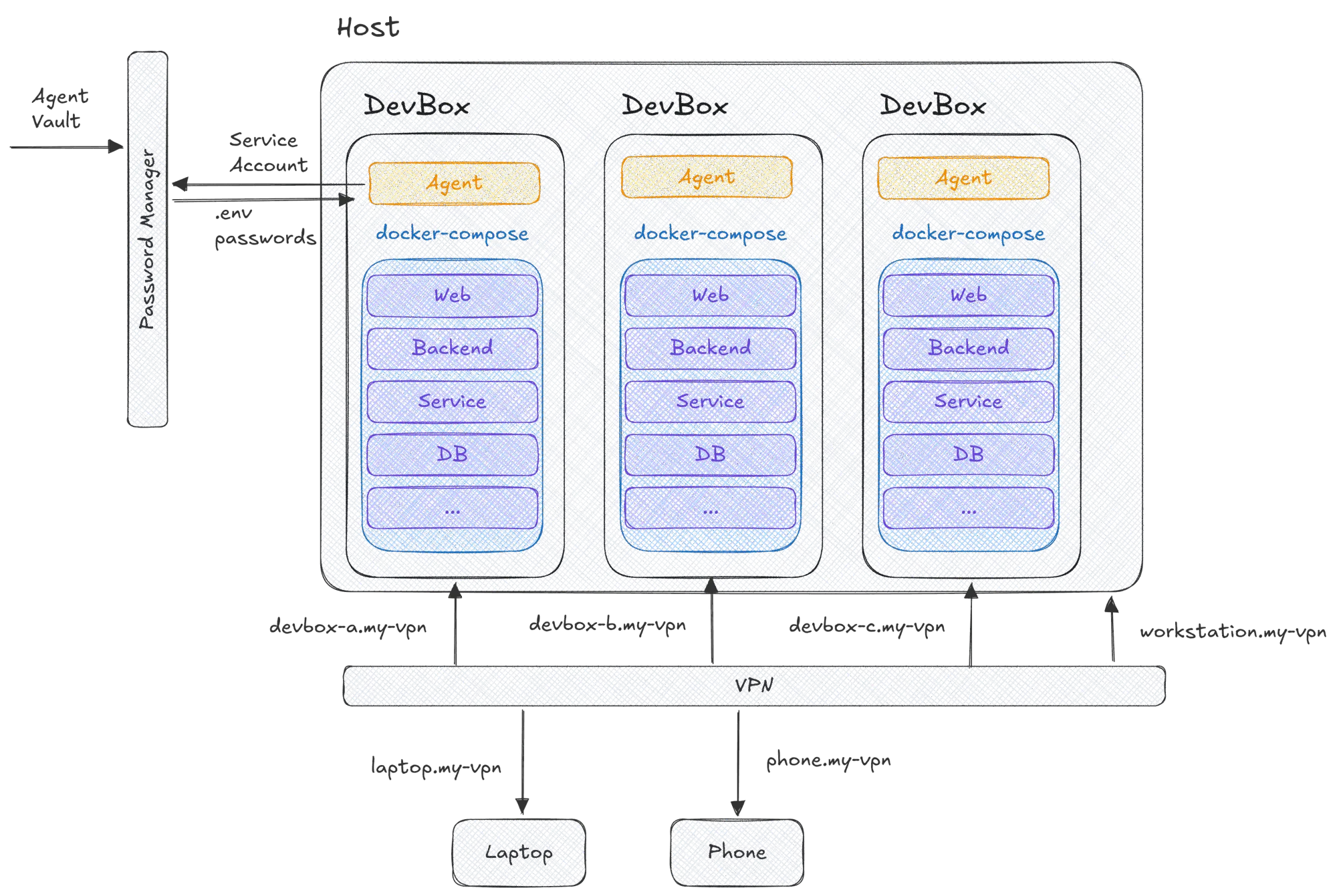

Password manager for secrets and SSH injection

Secrets are a critical part of running the application locally, as it may need access to APIs. .env files are a real pain to manage, update and synchronize. Luckily, password managers are increasingly solving problems in that space. .env files don’t have to live on disk; they can be mounted by the password manager. Passwords and API secrets which do not belong in .env can be retrieved on demand and injected into the application at the time it needs them. The landscape here is not ideal yet, but solutions are moving in the right direction. You can create service accounts with access to curated vaults, which gives the agent access to the keys, and you can revoke access at any time for the service account associated with the agent.

There is a chicken-and-egg problem in this: the in-container op needs an OP_SERVICE_ACCOUNT_TOKEN, but that token is itself a secret. The trick I landed on is two-stage. A host-side initialize.sh runs before docker create, reads a template.env of op://... refs (including the service-account token itself), and renders it into an env.container file using my signed-in op desktop session on the host. Docker then loads that file via --env-file, and inside the container op keeps working non-interactively against the same vaults via the service-account token it just got handed. The host stays the only place that holds an interactive 1Password identity; every devbox gets a scoped, revocable token derived from it.
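The host-side rendering stage can be sketched as follows. This is an illustration under assumptions, not the actual initialize.sh: it assumes template.env contains plain KEY=VALUE lines whose values are either literals or op:// references, and it resolves each reference with op read from the signed-in host session. The function names are hypothetical.

```python
import os
import subprocess
from typing import Callable, Iterable, List


def read_with_op(reference: str) -> str:
    """Resolve one op:// reference using the 1Password CLI on the host."""
    result = subprocess.run(
        ["op", "read", reference], check=True, capture_output=True, text=True
    )
    return result.stdout.rstrip("\n")


def render_env(
    template_lines: Iterable[str], resolve: Callable[[str], str] = read_with_op
) -> List[str]:
    """Turn template lines into KEY=VALUE lines with op:// refs resolved."""
    rendered: List[str] = []
    for line in template_lines:
        stripped = line.strip()
        if not stripped or stripped.startswith("#"):
            continue  # skip blanks and comments
        key, sep, value = stripped.partition("=")
        if not sep:
            raise ValueError(f"not a KEY=VALUE line: {stripped!r}")
        if value.startswith("op://"):
            value = resolve(value)
        rendered.append(f"{key}={value}")
    return rendered


def write_env_file(path: str, lines: List[str]) -> None:
    """Write the rendered file readable by the owner only (mode 0600)."""
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
    with os.fdopen(fd, "w") as handle:
        handle.write("\n".join(lines) + "\n")
```

The resolver is a parameter so the rendering logic can be exercised without touching a vault; on the host you would call render_env on the lines of template.env and hand the result to write_env_file("env.container", ...) before the container is created.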

With remote control solutions, what I think is increasingly needed is first-class push notification support in password managers and beyond, where you can approve an agent’s access to the vault from your phone or desktop while it is running independently. There is already a precedent in ssh-agent forwarding, and for a connected session (laptop-devcontainer, workspace-devcontainer) the ideal setup would be similar: for a given interactive session, the client’s password manager agent socket is forwarded to the remote box, and every time a secret needs to be accessed, an approval popup is triggered on the client for biometric or other authorization. This would improve on the existing setup, where the agent’s vault needs to be constantly updated and there is no room for dynamic secret injection or for explicit approval where it is desired.

How does it all come together?

I zeroed in on the following:

- Dockerfile to define my dev environment image

- devcontainer.json as a standard to declaratively define the environment, mount points, additional setup scripts and VS Code extensions to be installed

- tailscale for making sure each of my devcontainers is available on my VPN network and accessible anywhere in the world from my workstation

- 1password for managing access to secrets, ssh-agent forwarding and secret injection into the dev environment with a service account

- devpod to manage devcontainers on my workstation

Now, when I want to work on a new feature, I spin up a new container using devpod. This loads my development image and injects secrets into it by running op inject, so that the Tailscale API key, the GitHub API key and a few more are there. Then it starts tailscaled, dockerd and sshd. The host’s dotfiles are mounted read-write into each of the devboxes, so I can modify them in one and have the change immediately reflected in the others. This makes it easy to share slash commands and the like across my agents right away.
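One way to picture how these pieces meet in devcontainer.json is to generate the file from a small Python function. This is a sketch, not my actual configuration: the property names (build, initializeCommand, runArgs, mounts, remoteUser, postStartCommand, customizations) come from the Dev Containers specification, while every path, the start-services.sh script and the function names are hypothetical placeholders. How fully devpod honors each property is worth verifying against its documentation.

```python
import json
from typing import Any, Dict, List


def devcontainer_config(dotfiles_dir: str, extensions: List[str]) -> Dict[str, Any]:
    """Build a devcontainer.json structure for one devbox."""
    return {
        "name": "devbox",
        "build": {"dockerfile": "Dockerfile"},
        # Runs on the host before the container is created; this is where
        # the secret template would be rendered into an env file.
        "initializeCommand": "./.devcontainer/initialize.sh",
        # Extra `docker run` arguments: load the rendered secrets.
        "runArgs": ["--env-file", "${localWorkspaceFolder}/.devcontainer/env.container"],
        # Bind-mount the host dotfiles (read-write by default).
        "mounts": [f"source={dotfiles_dir},target=/home/dev/dotfiles,type=bind"],
        "remoteUser": "dev",
        # Hypothetical script that starts tailscaled, dockerd and sshd.
        "postStartCommand": "/usr/local/bin/start-services.sh",
        "customizations": {"vscode": {"extensions": extensions}},
    }


def render(config: Dict[str, Any]) -> str:
    """Serialize the structure as pretty-printed JSON."""
    return json.dumps(config, indent=2) + "\n"
```

Writing render(devcontainer_config("/home/me/dotfiles", ["golang.go"])) to .devcontainer/devcontainer.json would give devpod a single declarative description of the image, the secrets file, the dotfiles mount and the services to start.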

Quirks

- Make sure your image populates /etc/environment. When you connect to the remote box through ssh, the session goes through PAM, which reads /etc/environment; if the file is not populated, your shell may be missing some of your configuration.

- Share toolchain caches across devboxes via named volumes. Without this the “10-second new box” claim collapses on the first go build that has to re-download every module. I mount a set of named volumes (devbox-go-mod, devbox-go-build, devbox-bun-cache, devbox-npm-cache, devbox-uv-cache, devbox-cargo-registry, devbox-cargo-git) at the right paths inside /home/dev, so every devbox on the host hits a warm cache from the first command. They auto-seed from the image on first mount, so toolchain rebuilds propagate without any manual seeding.

- Run a host-side OCI registry as a shared buildx cache. A single registry container, reachable from every devbox as host.docker.internal:5050, backs --cache-from/--cache-to=type=registry. I wrap docker build, docker buildx build, and docker compose build/up/run/create in a shell function that auto-injects the cache flags so I never have to remember them; compose builds get a per-service ref (devbox-cache/<service>) so parallel manifest writes don’t collide. External image pulls aren’t shared (each DinD has its own image store); the cache only helps builds.

- Passwordless sudo for the in-container dev user. This isn’t sloppiness; it’s the thing that makes --dangerously-skip-permissions actually mean what the name says. Sudo prompts in the middle of an unattended agent run defeat the whole point of “disconnect and let it run.” The blast radius is bounded by the container, the per-devbox docker volume, and the 1Password service-account scope, so unattended root inside this sandbox is the right tradeoff.
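The named volumes and the registry cache from the quirks above boil down to a handful of extra docker arguments. The sketch below builds them in Python; the real wrapper is a shell function that is not shown here. The volume names, registry address and per-service ref are taken from the text, while the target paths are assumed tool defaults for a user whose home is /home/dev, and the function names are hypothetical.

```python
from typing import Dict, List

# Assumed default cache locations for each toolchain under /home/dev.
CACHE_VOLUMES: Dict[str, str] = {
    "devbox-go-mod": "/home/dev/go/pkg/mod",
    "devbox-go-build": "/home/dev/.cache/go-build",
    "devbox-bun-cache": "/home/dev/.bun/install/cache",
    "devbox-npm-cache": "/home/dev/.npm",
    "devbox-uv-cache": "/home/dev/.cache/uv",
    "devbox-cargo-registry": "/home/dev/.cargo/registry",
    "devbox-cargo-git": "/home/dev/.cargo/git",
}

REGISTRY = "host.docker.internal:5050"


def volume_args(volumes: Dict[str, str] = CACHE_VOLUMES) -> List[str]:
    """`docker run` arguments that mount every shared cache volume."""
    args: List[str] = []
    for name, target in volumes.items():
        args += ["--mount", f"type=volume,source={name},target={target}"]
    return args


def cache_flags(service: str, registry: str = REGISTRY) -> List[str]:
    """buildx flags that read from and write to a per-service cache ref."""
    ref = f"{registry}/devbox-cache/{service}"
    return [
        "--cache-from", f"type=registry,ref={ref}",
        # mode=max is an assumption: it also caches intermediate layers.
        "--cache-to", f"type=registry,ref={ref},mode=max",
    ]
```

For example, ["docker", "buildx", "build", *cache_flags("api"), "."] yields a build that pulls from and pushes to the devbox-cache/api ref, which is why parallel builds of different services do not overwrite each other's manifests.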